Inclusive Privacy and Security

Privacy and security mechanisms are usually designed with the general population in mind. As such, they often fall short of supporting many under-studied or marginalized sub-populations, such as children, older adults, people with disabilities, activists, journalists, victims of crimes or domestic violence, and people from non-Western or developing countries. The goal of this project is to design effective privacy and security mechanisms for people with disabilities, focusing on visual impairments. More broadly, this project aims to pave the way towards ``Inclusive Privacy and Security,'' a vision of designing effective privacy and security mechanisms for the widest range of people possible. Our design approach is informed in part by critical race and disability studies and by theories such as intersectionality.

inclusiveprivacy.org

We are currently conducting an ethnographic study on the everyday privacy and security practices of people with visual impairments, a co-design study with people with visual impairments, and a focus group study of people with marginalized identities.

Preliminary work was reported in:

Y. Wang. Inclusive Security and Privacy. IEEE Security & Privacy, July/August 2018.

Accessible Authentication

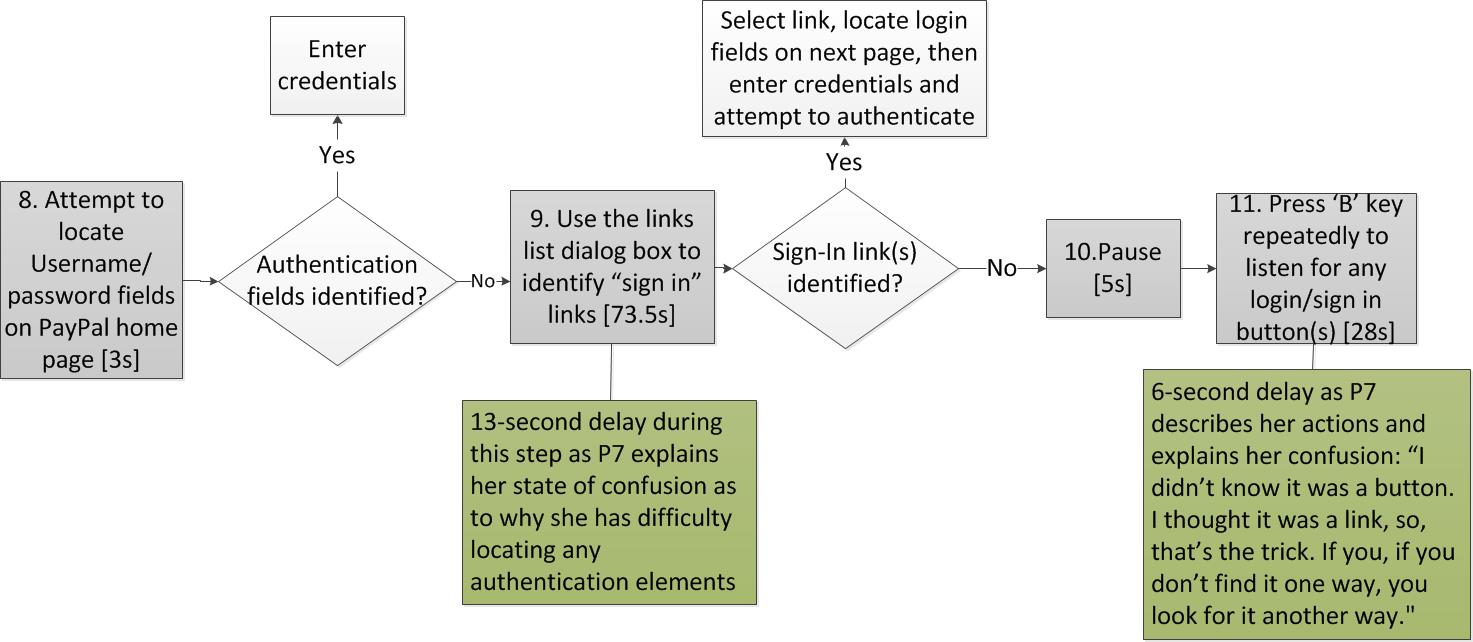

Logging into a device or a website with user names and passwords (i.e., authentication) is an essential everyday computing activity. However, this mundane operation can be daunting for people with disabilities. This project aims to develop and evaluate accessible authentication schemes that a wide range of users can use. Our current work focuses on people with visual impairments.

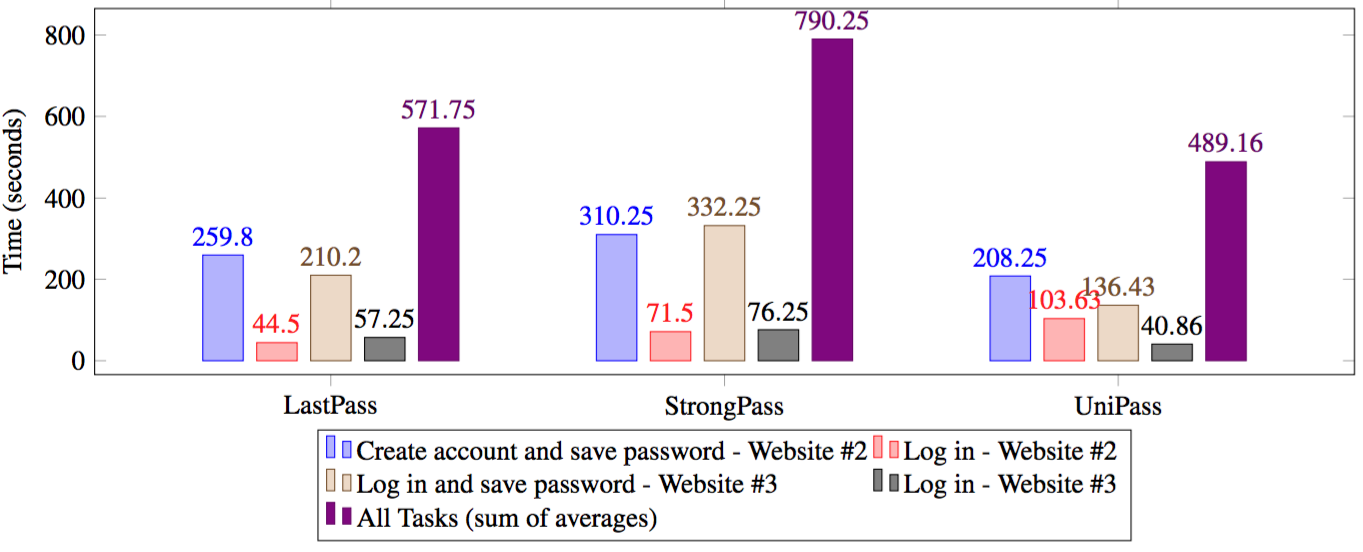

Informed by the aforementioned study, we designed UniPass, an accessible password manager based on a smart device for people with visual impairments. To evaluate UniPass, we compared it with two commercial password managers: LastPass, a popular password manager, and StrongPass, a smart device-based password manager. Our study results suggest that UniPass outperformed the other two password managers and that password managers are a promising authentication approach for people with visual impairments.

We filed a patent application based on the UniPass work.

Some results were reported in:

N. Barbosa, J. Hayes, Y. Wang. Towards Accessible Authentication: Learning from People with Visual Impairments. IEEE Internet Computing (Volume: 22, Issue: 2, March/April 2018).

B. Dosono, J. Hayes, Y. Wang. ``They Should Be Convenient and Strong'': Password Perceptions and Practices of Visually Impaired Users. Proceedings of the annual iConference 2017.

N. Barbosa, J. Hayes, Y. Wang. UniPass: Design and Evaluation of A Smart Device-Based Password Manager for Visually Impaired Users. Proceedings of International Joint Conference on Pervasive and Ubiquitous Computing (UbiComp2016).

B. Dosono, J. Hayes, Y. Wang. ``I'm Stuck!'': A Contextual Inquiry of People with Visual Impairments in Authentication. Proceedings of the Symposium on Usable Privacy and Security (SOUPS2015).

Privacy Mirror

Privacy behaviors of individuals are often inconsistent with their stated attitudes, a phenomenon known as the ``privacy paradox.'' These inconsistencies may lead to troublesome or regrettable experiences. This project aims to help people better understand their own privacy preferences and behavior, and make informed privacy decisions in different contexts.

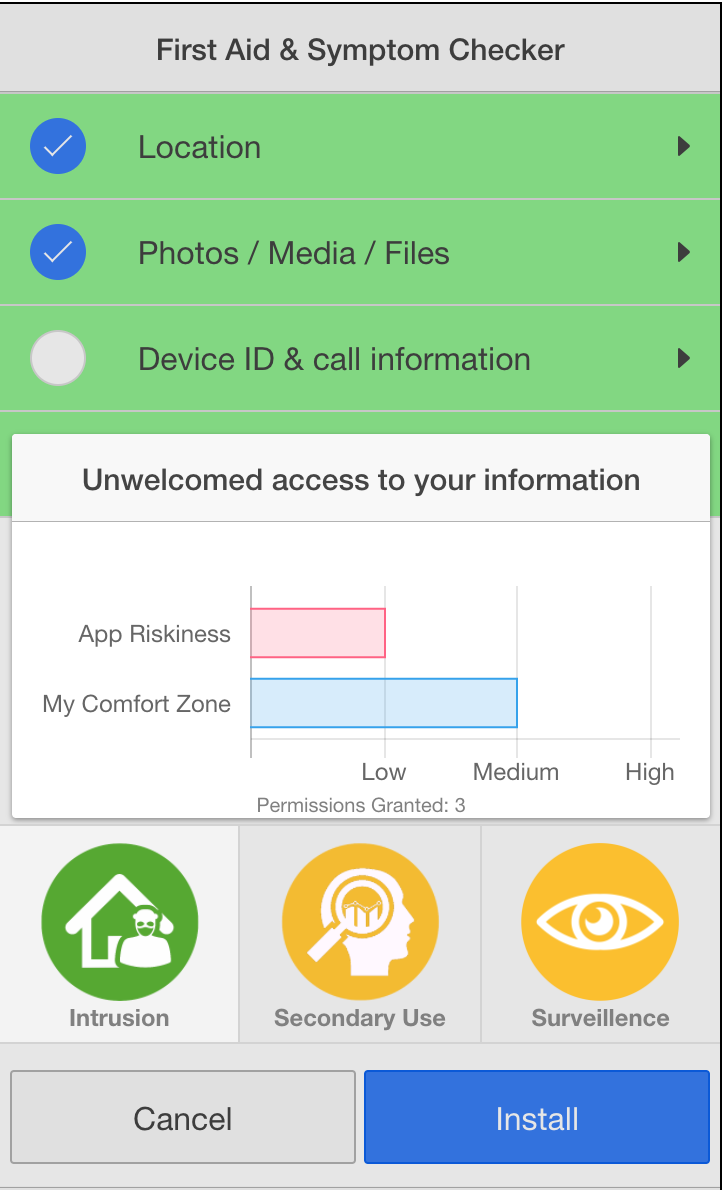

We proposed a personalized privacy notification approach that juxtaposes users' general privacy attitudes towards a specific technology with the potential privacy riskiness of a particular instance of that technology, right when users decide whether and/or how to use it. Highlighting these privacy inconsistencies was designed to nudge users towards decisions that align with their privacy attitudes.

To illustrate this approach, we chose the domain of mobile apps and designed a privacy discrepancy interface that highlights the discrepancy between users' general privacy attitudes towards mobile apps and the potential privacy riskiness of a particular app, nudging them to make app installation and/or permission granting decisions that reflect their privacy attitudes. To evaluate this interface, we conducted an online experiment simulating the process of installing Android apps. We compared the privacy discrepancy approach with several existing privacy notification approaches. Our results suggest that the behaviors of participants who used the privacy discrepancy interface reflected their privacy attitudes better than did the behaviors of participants who used the other approaches.

Preliminary results were reported in:

C. Jackson, Y. Wang. Addressing Privacy Discrepancy through Personalized Notifications. To appear in the Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT). Oral presentation at UbiComp2018.

Smart Home Privacy

The home has long been considered a person's castle, a private and protected space. Internet-connected devices such as locks, cameras, thermostats, light bulbs, speakers and toys might make a home ``smarter'' but also raise thorny privacy issues because these devices may constantly and inconspicuously collect, infer or even share information about people in the home.

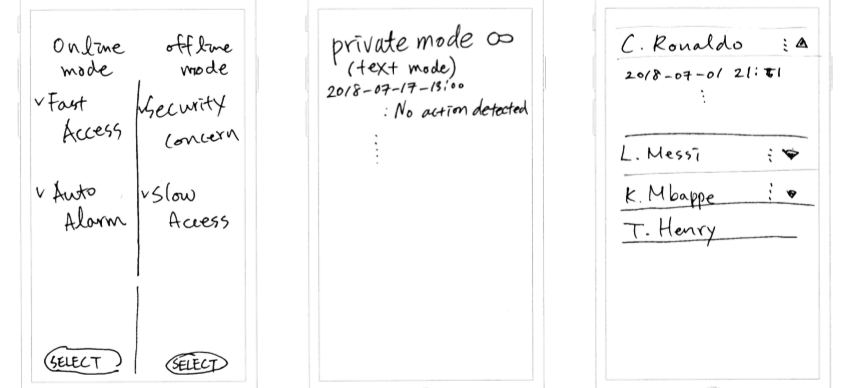

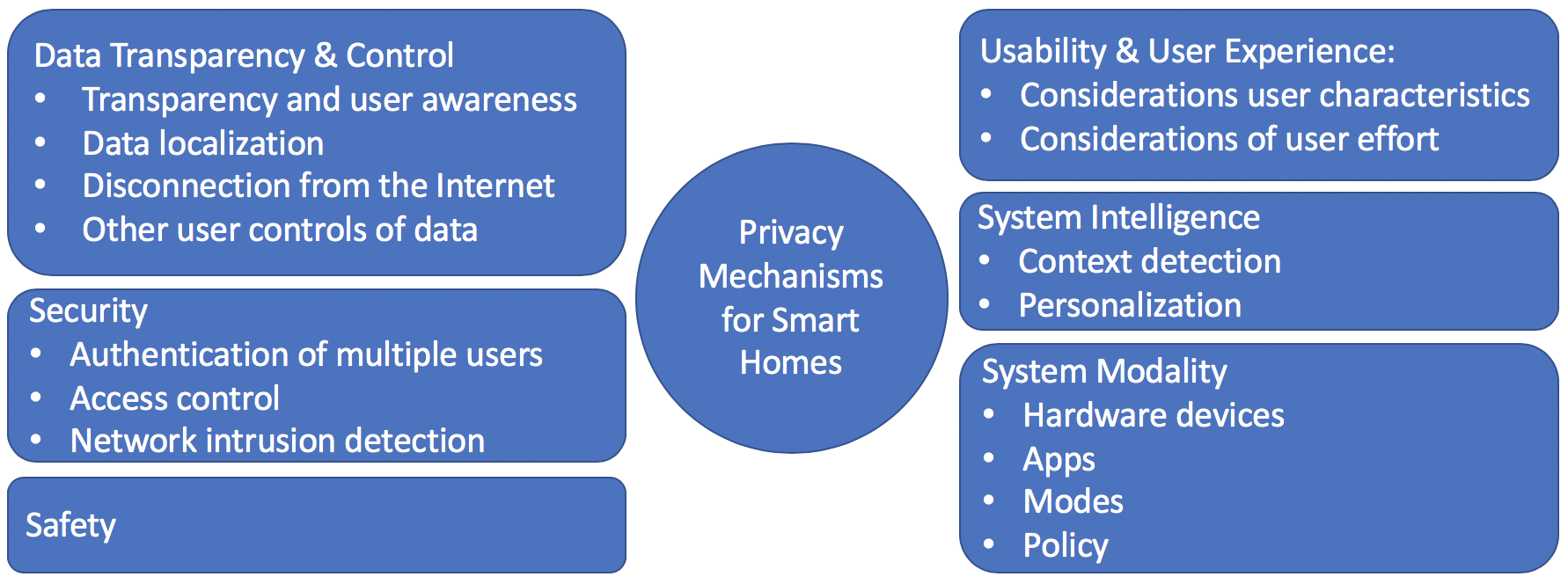

To explore user-centered privacy designs for smart homes, we conducted a co-design study in which we worked closely with diverse groups of participants to create new designs. This study helps fill a gap in the literature between studies of users' privacy concerns and privacy tools designed only by experts. Our participants' privacy designs often relied on simple strategies, such as data localization, disconnection from the Internet, and a private mode.

From these designs, we identified six key design factors: data transparency and control, security, safety, usability and user experience, system intelligence, and system modality. These factors can be used to guide future privacy designs of smart homes.

Preliminary results were reported in:

Y. Yao, S. Kaushik, J. Basdeo, Y. Wang. Defending My Castle: A Co-Design Study of Privacy Mechanisms for Smart Homes. Proceedings of the ACM Conference on Human Factors in Computer Systems (CHI2019).

Drone Privacy

Drones or unmanned aerial vehicles (UAVs) are lightweight aircraft that often carry cameras and can be controlled remotely or operated autonomously. Drones can enable many innovative applications, such as disaster responses, aerial photography and journalism, package delivery, and police investigation. However, because of drones’ small sizes and capabilities in flying and taking high-definition images and videos, government agencies, policy makers, consumer advocacy groups, and legal scholars have raised serious concerns about drones' usage.

While the extant literature suggests that drones can in principle invade people's privacy, little is known about how people actually think about drones. We conducted one of the very first empirical studies on people's perceptions of drones. Our participants raised both physical and information privacy issues against government, organization and individual use of drones. Our participants' reasoning about the acceptance of drone use was in part based on whether the drone is operating in a public or private space. However, our participants differed significantly in their definitions of public and private spaces. I gave an invited talk about this study at the Federal Trade Commission (FTC).

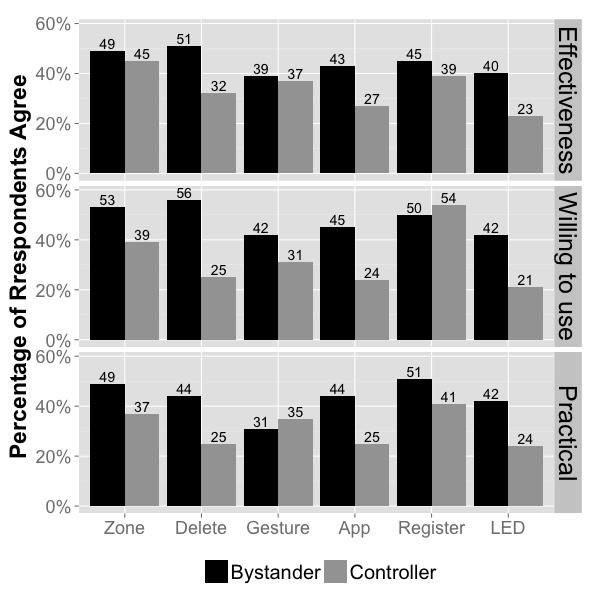

In a follow-up study, our drone controller interviewees expressed fewer privacy concerns about using drones. Many controller interviewees felt the privacy issues of drones were exaggerated, in part because of the media’s sensational coverage of controversial drone uses. These different attitudes between drone controllers and bystanders prompted us to further examine what privacy-enhancing mechanisms they would accept for drones. Privacy mechanisms accepted by both groups are more likely to be adopted in practice. We conducted online surveys testing people's perceptions of eight privacy mechanisms and found that owner registration and face blurring received the most support from both controllers and bystanders.

Results were reported in:

Y. Yao, H. Xia, Y. Huang, Y. Wang. Privacy Mechanisms for Drones: Perceptions of Drone Controllers and Bystanders in the U.S. Proceedings of the ACM Conference on Human Factors in Computer Systems (CHI2017).

Y. Yao, H. Xia, Y. Huang, Y. Wang. Free to Fly in Public Spaces: Drone Controllers Privacy Perceptions and Practices. Proceedings of the ACM Conference on Human Factors in Computer Systems (CHI2017).

Y. Wang, H. Xia, Y. Yao, Y. Huang. Flying Eyes and Hidden Controllers: A Qualitative Study of People’s Privacy Perceptions of Civilian Drones in The US. Proceedings on Privacy Enhancing Technologies (PETS2016).

Crowdsourcing Privacy

Crowdsourcing platforms such as Amazon Mechanical Turk (MTurk) are widely used by organizations, researchers, and individuals to outsource a broad range of tasks to crowd workers. Prior research has shown that crowdsourcing can pose privacy risks (e.g., de-anonymization) to crowd workers. However, little is known about the specific privacy issues crowd workers have experienced and how they perceive the state of privacy in crowdsourcing. We conducted an online survey study of MTurk crowd workers from the US, India, and other countries and areas. Our respondents reported different types of privacy concerns (e.g., data aggregation, profiling, scams), experiences of privacy losses (e.g., phishing, malware, stalking, targeted ads), and privacy expectations on MTurk (e.g., screening tasks). Respondents from multiple countries and areas reported experiences with the same privacy issues, suggesting that these problems may be endemic to the whole MTurk platform.

I gave an invited talk about this study at the Federal Trade Commission (FTC). I also advised Amazon on how MTurk can improve its crowd workers' privacy.

Preliminary results were reported in:

H. Xia, Y. Wang, Y. Huang, A. Shah. ``Our Privacy Needs To Be Protected At All Costs'': Crowd Workers' Privacy Experiences on Mechanical Turk. Proceedings of the ACM (PACM): Human-Computer Interaction: Volume 1: Issue 1: Computer-Supported Cooperative Work and Social Computing (CSCW2018).

Y. Huang, C. White, H. Xia, Y. Wang. A Computational Cognitive Modeling Approach to Understand and Design Mobile Crowdsourcing for Campus Safety Reporting. International Journal of Human-Computer Studies, Volume 102 Issue C, June 2017.

Y. Huang, C. White, H. Xia, Y. Wang. Modeling Sharing Decision of Campus Safety Reports and Its Design Implications to Mobile Crowdsourcing for Safety. Proceedings of the International Conference on Human-Computer Interaction with Mobile Devices and Services (MobileHCI 2015).

Y. Wang, Y. Huang, C. Louis. Towards a Framework for Privacy-Aware Mobile Crowdsourcing. Proceedings of the 5th ASE/IEEE International Conference on Information Privacy, Security, Risk and Trust (PASSAT 2013).

Online Behavioral Advertising (OBA) and Privacy

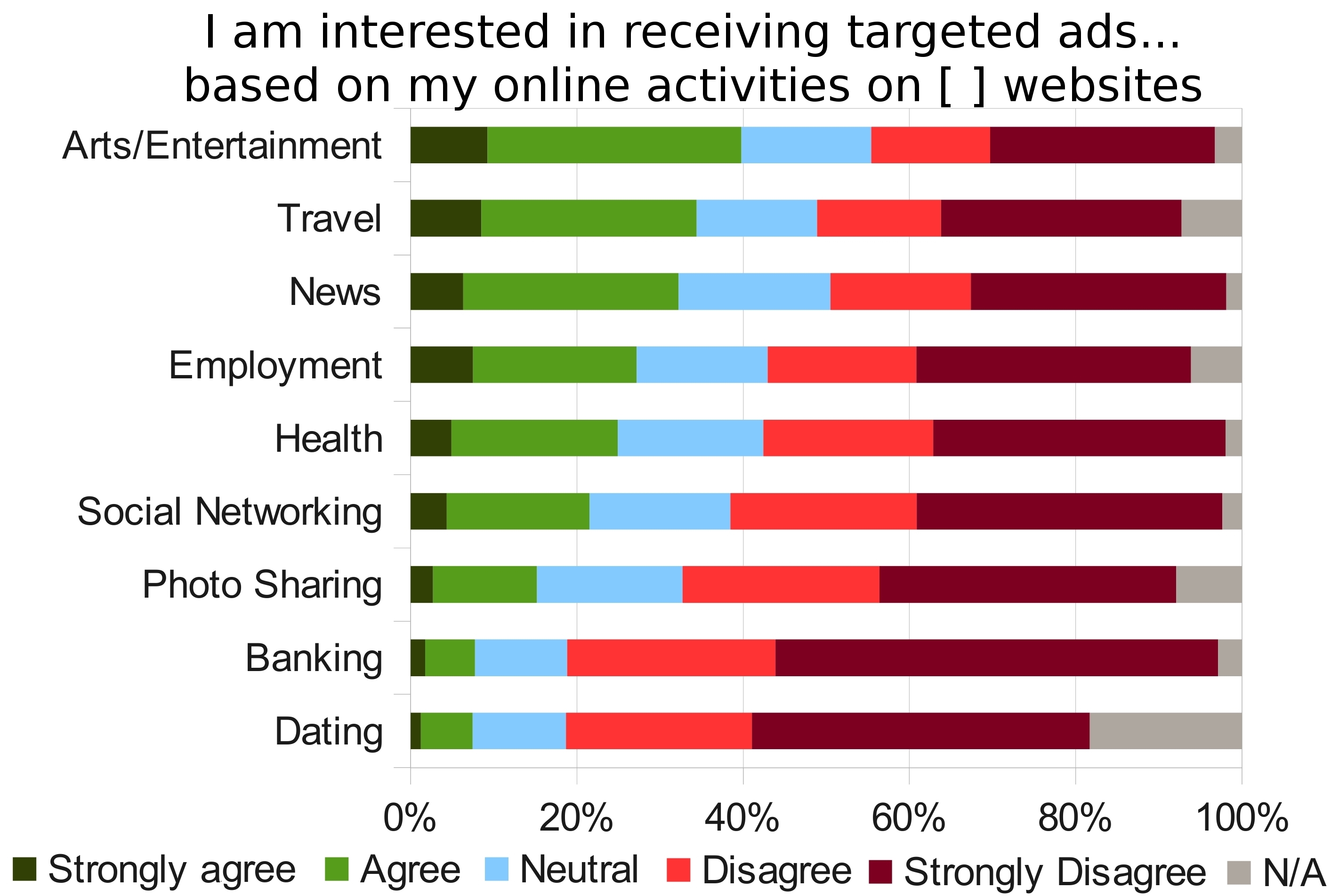

Online behavioral advertising (OBA) is ``the practice of tracking an individual's online activities in order to deliver advertising tailored to the individual's interests.'' OBA is prevalent and has raised major privacy concerns. My CMU collaborators and I conducted a series of studies on people's perceptions and preferences of OBA. For instance, participants in our interview study found OBA to be simultaneously useful and privacy invasive. To study the effect of properly configured industry privacy tools on limiting OBA, we conducted an Internet measurement study and found that some tools can, to some extent, limit OBA while others cannot. However, when we tested the usability of nine major industry OBA privacy tools in a lab study, we found serious usability flaws that hindered people's abilities to correctly configure all of the tools we tested. This work provided important empirical evidence that the industry self-regulation was not working for ordinary people.

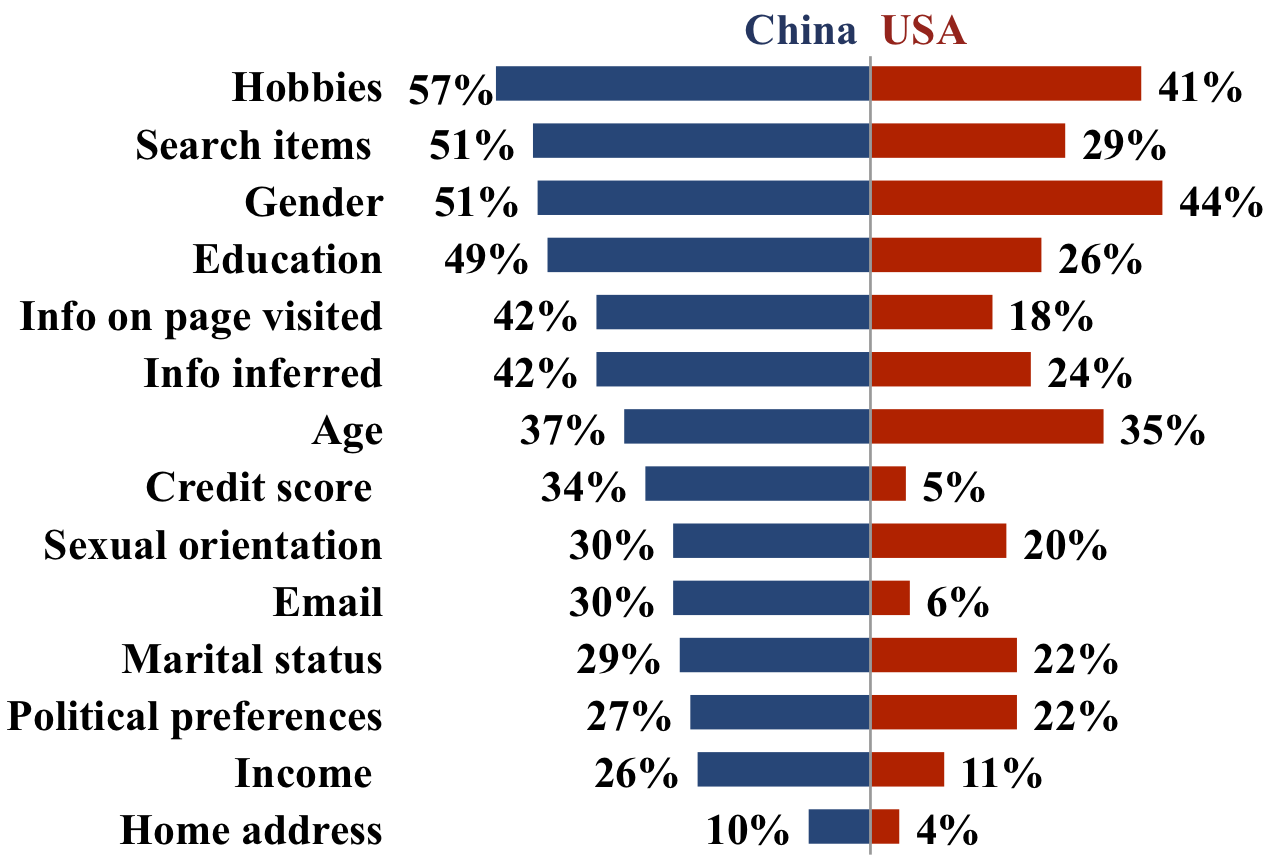

Since I joined Syracuse University (SU), my students and I have conducted a study to examine whether contextual factors such as country (US vs. China), activity (e.g., online shopping vs. online banking), and platform (desktop/laptop vs. mobile app) would affect people's willingness to share their information for OBA purposes. Our American participants were significantly less willing to share their data and had more specific privacy concerns than their Chinese counterparts. Participants’ OBA preferences also varied significantly across different online activities, suggesting the potential of context-aware privacy tools for OBA.

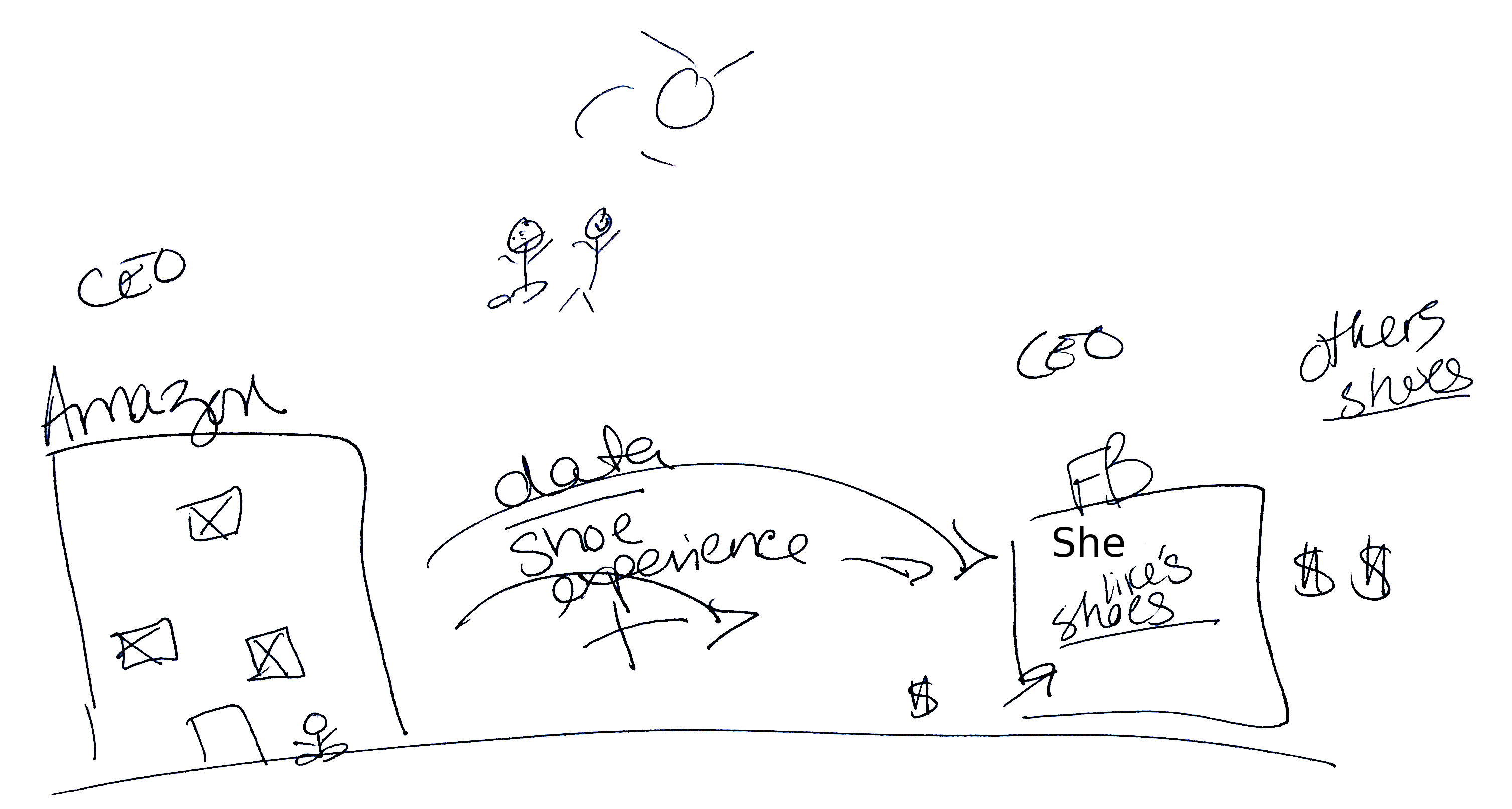

In a follow-up interview study, we examined people's actual understandings of how OBA works. This is an important question because people often draw on their understanding to make decisions. We identified four ``folk models'' held by our participants about how OBA works. We showed how these models were either incomplete or inaccurate. In addition, most of our participants felt that what information is being tracked is more important than who is tracking it. This result suggests that an information-based blocking model might be more desirable for Internet users than the tracker-based blocking model used by existing tools (e.g., Ghostery), and it motivates a new direction for tools designed to block online tracking.
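The contrast between the two blocking models can be sketched in a few lines. This is only an illustration under stated assumptions: the `TrackingRequest` type, the data-type labels, and the example domains are hypothetical and are not taken from the study or from any existing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackingRequest:
    """A hypothetical outgoing request to a third party."""
    tracker: str            # who is tracking (a made-up domain)
    data_types: frozenset   # what information the request would carry


def tracker_based_allow(request, blocked_trackers):
    """Tracker-based model: the decision depends only on WHO is tracking."""
    return request.tracker not in blocked_trackers


def information_based_allow(request, blocked_data_types):
    """Information-based model: the decision depends on WHAT is tracked."""
    return request.data_types.isdisjoint(blocked_data_types)


request = TrackingRequest("ads.example", frozenset({"location", "page_url"}))

# The tracker is not on the block list, so a tracker-based tool lets it through.
allowed_by_tracker_model = tracker_based_allow(request, {"other.example"})

# The user objects to location tracking, so an information-based tool blocks it.
allowed_by_information_model = information_based_allow(request, {"location"})
```

In this sketch the same request is allowed under one model and blocked under the other, which is the distinction participants' preferences point to.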

I gave an invited talk about this study at the Federal Trade Commission (FTC).

Some results were reported in:

Y. Yao, D. Lo Re, Y. Wang. Folk Models of Online Behavioral Advertising. Proceedings of the ACM Conference on Computer-Supported Cooperative Work and Social Computing (CSCW 2017).

Y. Wang, H. Xia, Y. Huang. Examining American and Chinese Internet Users' Contextual Privacy Preferences of Behavioral Advertising. Proceedings of the ACM Conference on Computer-Supported Cooperative Work and Social Computing (CSCW 2016).

P. Leon, B. Ur, Y. Wang, M. Sleeper, R. Balebako, R. Shay, L. Bauer, M. Christodorescu, L. Cranor. What Matters to Users? Factors that Affect Users' Willingness to Share Information with Online Advertisers. Proceedings of Symposium on Usable Privacy and Security (SOUPS2013).

B. Ur, P. Leon, L. Cranor, R. Shay, Y. Wang. Smart, Useful, Scary, Creepy: Perceptions of Online Behavioral Advertising. Proceedings of Symposium on Usable Privacy and Security (SOUPS2012).

P. Leon, B. Ur, R. Shay, Y. Wang, R. Balebako, L. Cranor. Why Johnny Can't Opt Out: A Usability Evaluation of Tools to Limit Online Behavioral Advertising. Proceedings of ACM Conference on Human Factors in Computer Systems (CHI2012). Best Paper Honorable Mention.

Privacy Nudges

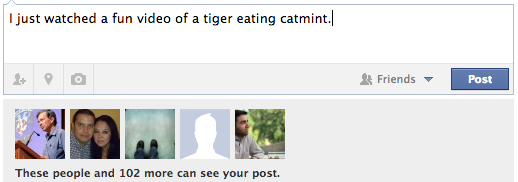

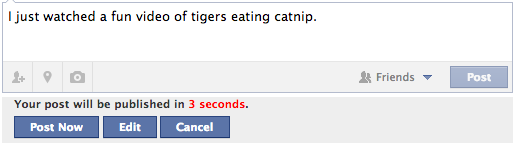

Anecdotal evidence and scholarly research have shown that Internet users may regret some of their online disclosures. To help people avoid posting regrettable content, we drew from behavioral economics and explored a soft paternalistic approach of intervention called privacy nudges.

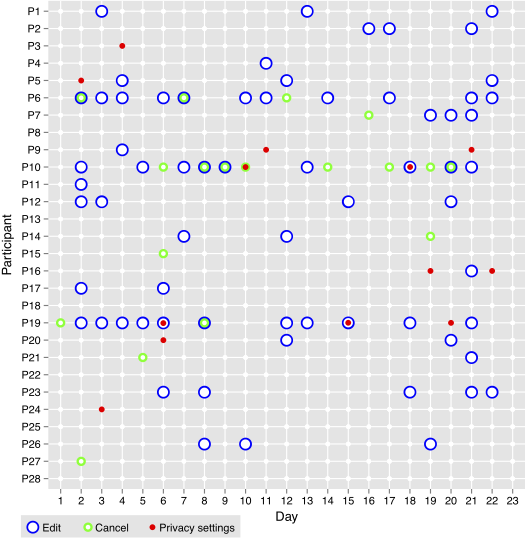

We then ran a series of field trials (lasting 3 weeks to 6 weeks) of the privacy nudges with real Facebook users and interviewed them afterwards. We analyzed participants' interactions with the nudges, the content of their posts, and opinions collected through surveys. We found that reminders about the audience of posts can prevent unintended disclosures without major burden; however, introducing a time delay before publishing users' posts can be perceived as both beneficial and annoying. On balance, some participants found the nudges helpful while others found them unnecessary or overly intrusive. In follow-up work, we presented an extensive literature review on the opportunities and challenges of applying nudges in security and privacy.

Some results were reported in:

A. Acquisti, I. Adjerid, R. Balebako, L. Brandimarte, L. Cranor, S. Komanduri, P. Leon, N. Sadeh, F. Schaub, M. Sleeper, Y. Wang, and S. Wilson. Nudges for Privacy and Security: Understanding and Assisting Users' Choices Online. ACM Computing Surveys, 50(3):44:1-44:41.

Y. Wang, P.G. Leon, L. Cranor, A. Acquisti, N. Sadeh, A. Forget. A Field Trial of Privacy Nudges for Facebook. Proceedings of the ACM Conference on Human Factors in Computer Systems (CHI2014).

Y. Wang, P.G. Leon, X. Chen, S. Komanduri, G. Norcie, K. Scott, A. Acquisti, L. Cranor, N. Sadeh. From Facebook Regrets To Facebook Privacy Nudges. Ohio State Law Journal 74(6):1307-1334.

Multi-National Study of SNS Privacy

Social network sites (SNSs) have become a global phenomenon. Meanwhile, privacy issues in SNSs have been hotly discussed in public media, particularly about Facebook in the US media. Despite the steady rise of SNSs worldwide, there is still little understanding of SNS privacy in other countries. To the best of our knowledge, this is the first empirical cross-cultural study that focuses on SNS privacy. Several studies have shown that Internet usage and behavior vary across cultures (such as in instant messaging, SNSs, and online information sharing). We hypothesize that culture may affect how SNS users perceive and make privacy-sensitive decisions.

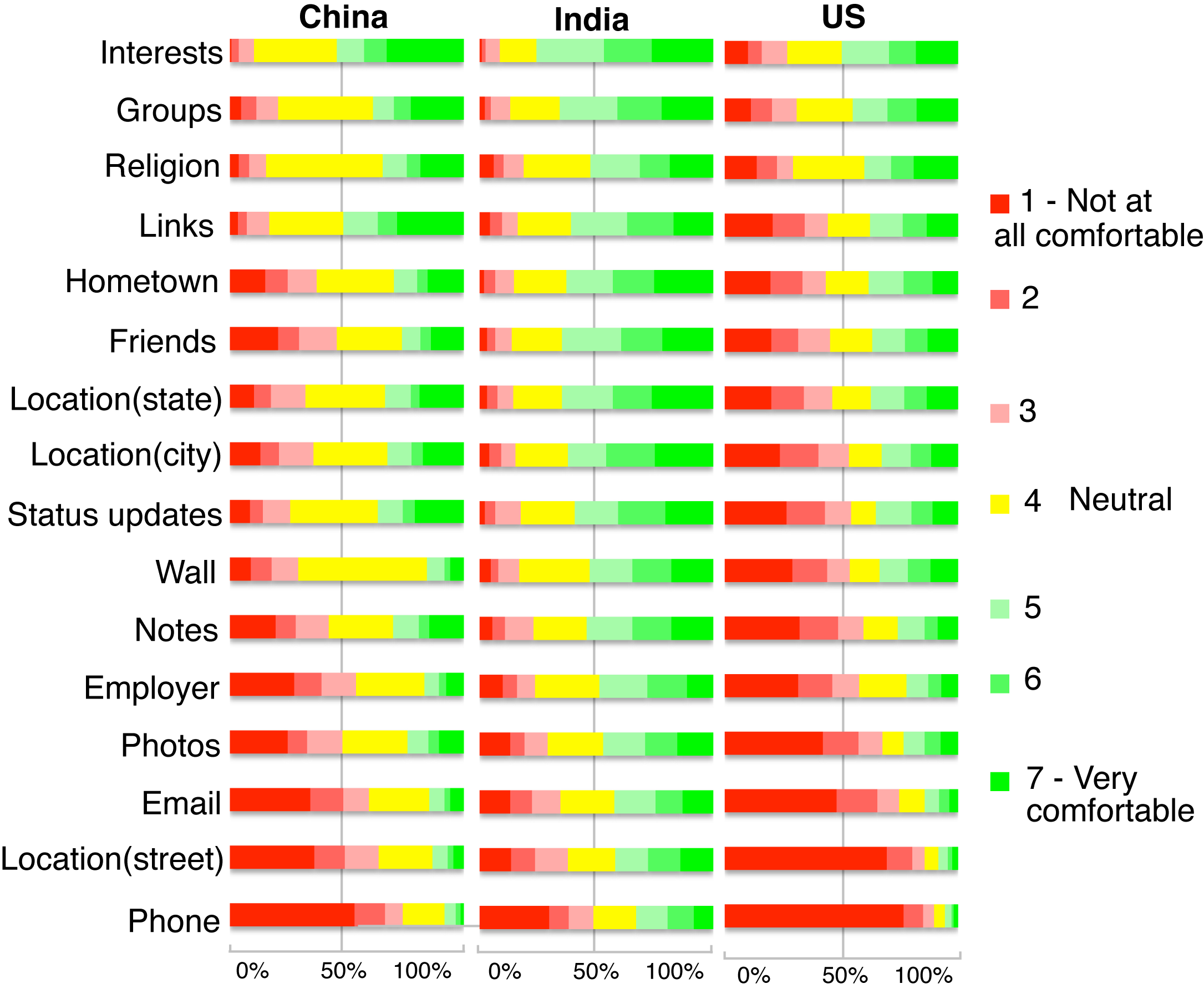

We conducted an online survey study that investigates American, Chinese, and Indian SNS users’ privacy attitudes and practices. Based on 924 valid responses from the three countries, we found that American respondents were generally the most concerned about privacy, followed by the Chinese and then the Indian respondents. However, the US sample exhibited the lowest level of desire to restrict the visibility of their SNS information to certain people (e.g., co-workers). The Chinese respondents showed significantly higher concerns about identity issues on SNSs such as fake names and impersonation. The Indian and Chinese samples had a much higher percentage of users who prefer targeted ads over untargeted ads. About one third of our respondents found SNS privacy settings hard to use.

We are currently collecting data from France and working on an Arabic version of the survey.

Preliminary results were reported in:

Y. Wang, Y. Li, B. Semaan, J. Tang. Space Collapse: Reinforcing, Reconfiguring and Enhancing Chinese Social Practices through WeChat. Proceedings of the 10th International AAAI Conference on Web and Social Media (ICWSM 2016).

Y. Wang, G. Norcie, L.F. Cranor. Who Is Concerned about What? A Study of American, Chinese and Indian Users’ Privacy Concerns on Social Networking Sites. Proceedings of the 4th International Conference on Trust and Trustworthy Computing (TRUST2011).

Regrets on Facebook

As social networking sites (SNSs) gain in popularity, regrettable stories continue to be reported by news media. In September 2010, a British teen created an event on Facebook to invite a few close friends to her birthday party, but did not mark the event as private. This drew tens of thousands of potential attendees, and attracted local police attention. In another case, a pierogi mascot for the Pittsburgh Pirates was fired because he posted disparaging comments about the team on his Facebook page. More recently, a high school teacher was forced to resign because she posted a picture on Facebook in which she was holding a glass of wine and a mug of beer. These incidents demonstrate the negative impact that a single act can have on an SNS user.

In order to protect users’ welfare and create a healthy and sustainable online social environment, it is imperative to understand these regrettable actions and, more importantly, to help users avoid them. While there is a large body of SNS literature, we found little empirical research that focuses on the negative aspects of SNS usage. In this work, we examine accounts of regrettable incidents that we collected through surveys, interviews and user diaries. We chose to focus on Facebook because it is a hugely popular SNS with more than 500 million active users. Our aim is to develop a taxonomy of regrets, analyze their causes and consequences, and examine users’ existing coping mechanisms.

Preliminary results were reported in:

Y. Wang, S. Komanduri, P.G. Leon, G. Norcie, A. Acquisti, L.F. Cranor. “I regretted the minute I pressed share”: A Qualitative Study of Regrets on Facebook. Proceedings of the 7th Symposium on Usable Privacy and Security (SOUPS2011).

PEP: Privacy-Enhanced Personalization

Web personalization has been shown to be advantageous for both online customers and vendors. However, its benefits are severely counteracted by privacy concerns. Personalized systems need to take these concerns into account, as well as privacy laws and industry self-regulations that may be in effect. Our review of more than 40 national privacy laws shows that when these constraints are present, they affect not only the personal data that can be collected, but also the methods that can be used to process the data.

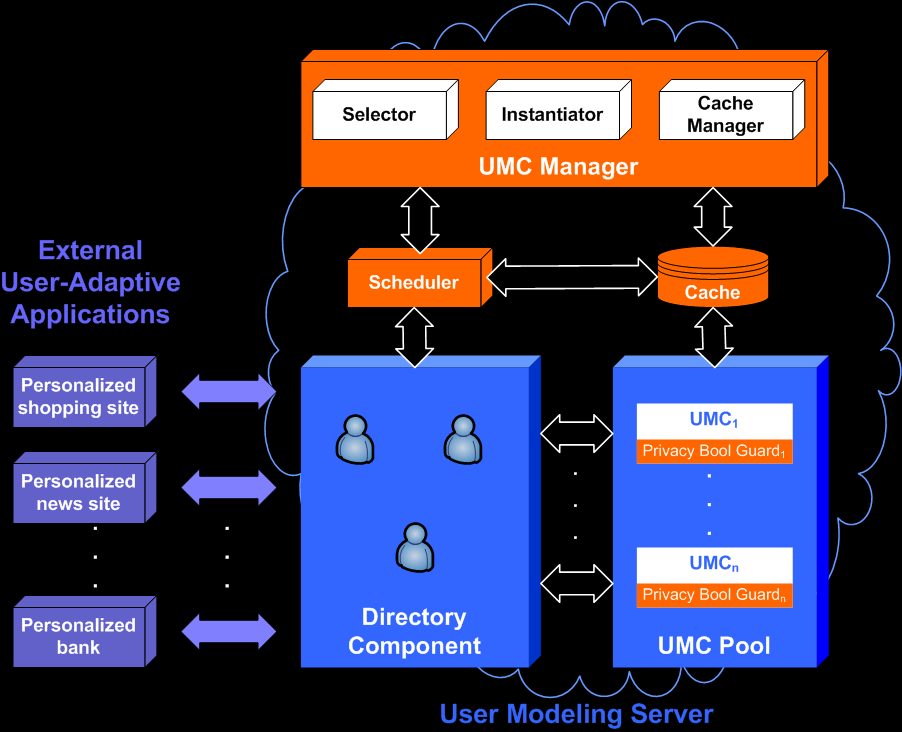

The PEP project aims to maximize the benefits of personalization while satisfying the currently prevailing privacy constraints. Since such privacy constraints can change over time, we seek a systematic and flexible mechanism that can accommodate these dynamics. We looked at several existing approaches and found that they fail to offer a practical and efficient solution. Inspired by the ability of software product line architecture (PLA) to support software variability, we proposed a personalization framework (see the figure) that enables run-time reconfiguration of the personalized system to cater to a user’s prevailing privacy constraints. The mappings between privacy constraints and the system architecture can be hard to model and maintain. Building on ideas from software configuration management, I modeled these mappings using change sets and relationships that support better traceability and separation of concerns. I developed a system prototype based on ArchStudio, an open-source architecture-based development platform.
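The idea of mapping privacy constraints to architectural change sets can be illustrated with a small sketch. It is not the ArchStudio-based prototype: the component names, constraint names, and the `configure` helper are all hypothetical, chosen only to show how a per-user configuration could be derived while recording which change sets were applied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeSet:
    """A named set of architectural changes (hypothetical structure)."""
    name: str
    add: frozenset = frozenset()
    remove: frozenset = frozenset()


# Hypothetical baseline architecture of a personalized system.
BASE_COMPONENTS = frozenset(
    {"clickstream_logger", "collaborative_filter", "profile_store"}
)

# Hypothetical mapping from a privacy constraint to the change set it triggers.
CONSTRAINT_TO_CHANGE_SET = {
    "no_cross_session_profiles": ChangeSet(
        name="drop-persistent-profile",
        add=frozenset({"session_only_store"}),
        remove=frozenset({"profile_store"}),
    ),
    "no_inference_from_clicks": ChangeSet(
        name="drop-click-inference",
        remove=frozenset({"clickstream_logger", "collaborative_filter"}),
    ),
}


def configure(constraints):
    """Return the component set for a user plus the change sets applied.

    The list of applied change-set names keeps the constraint-to-architecture
    mapping traceable.
    """
    components = set(BASE_COMPONENTS)
    applied = []
    for constraint in sorted(constraints):
        if constraint not in CONSTRAINT_TO_CHANGE_SET:
            raise ValueError(f"no change set defined for {constraint!r}")
        change_set = CONSTRAINT_TO_CHANGE_SET[constraint]
        components -= change_set.remove
        components |= change_set.add
        applied.append(change_set.name)
    return frozenset(components), applied


components, applied = configure({"no_cross_session_profiles"})
```

Because the mapping lives in one table, a change in the prevailing constraints only requires re-running `configure` for the affected users, which is the kind of run-time reconfiguration the framework targets.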

Our PLA-based approach realizes PEP by instantiating separate personalization systems, potentially one for each user. This calls system performance and scalability into question. To address this issue, I designed and implemented two optimizations: a multi-level caching mechanism and a distributed request processing mechanism. Based on my performance evaluation, the combination of these two measures can significantly improve system performance. With a moderate number of computers in a cloud computing environment, international sites with even the heaviest Internet traffic (e.g., Yahoo) can adopt the PLA-based approach to enable PEP. I am currently also conducting an experiment to assess the effectiveness of this approach from a user’s standpoint. The results will be compared with the findings of a similar experiment conducted by collaborators in Germany. From this, we aim to gain cross-cultural insight into people’s perceptions of and attitudes towards privacy-enhancing techniques.

Prof. Alfred Kobsa, Prof. André van der Hoek, Dr. Eric Dashofy and Scott Hendrickson have provided tremendous insight into this project. Related publications can be found here.
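One way to picture why caching helps when each user may get a separately instantiated system is the sketch below. It assumes a two-level design (a per-user level and a level shared by all users with the same constraint set); the actual levels used in the project are not described here, so the class, its keys, and the `build` callback are hypothetical.

```python
class TwoLevelCache:
    """Hypothetical two-level cache for configured personalization systems."""

    def __init__(self, build):
        self._build = build          # callable: frozenset of constraints -> system
        self._by_user = {}           # level 1: user_id -> (constraints, system)
        self._by_constraints = {}    # level 2: constraints -> system
        self.builds = 0              # number of expensive instantiations

    def get(self, user_id, constraints):
        key = frozenset(constraints)

        # Level 1: the user's own entry, valid only if constraints are unchanged.
        entry = self._by_user.get(user_id)
        if entry is not None and entry[0] == key:
            return entry[1]

        # Level 2: a system already built for another user with the same constraints.
        system = self._by_constraints.get(key)
        if system is None:
            system = self._build(key)
            self.builds += 1
            self._by_constraints[key] = system

        self._by_user[user_id] = (key, system)
        return system


cache = TwoLevelCache(build=lambda key: {"constraints": key})
first = cache.get("user-1", {"no_cross_session_profiles"})
second = cache.get("user-2", {"no_cross_session_profiles"})
```

In this sketch the second user reuses the system built for the first, so the expensive instantiation happens once per distinct constraint set rather than once per user.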

Virtual Currency: not so virtual

What happens when the domains of HCI design and money intersect? The field of HCI has long prioritized understanding the contexts within which technologies are adapted and appropriated by their users. Though acknowledging that these contexts often have critical economic aspects (e.g., the “digital divide”), relatively little work in HCI has focused on the significance of money itself as one aspect of user interface and user experience design.

Money is more than just another kind of data. It is a social construct of complex psychological and cultural power. Its use entails connection to wider contexts, not just to “the market”, but also to contested structures of personal and public meaning, like social class and political economy. Moreover, the role of money in online experience and culture is becoming more important with the growth of paradigms such as collaborative community sites and virtual worlds. For example, with banking services being mashed up with social networking (e.g., prosper.com), or virtual worlds being marketed as real economies (e.g., Second Life), what it means to incorporate money into HCI design takes on new and broader relevance.

Thanks to Intel's support, Dr. Scott Mainwaring and I conducted an exploratory ethnographic study of virtual currency (VC) use in China in 2007. An estimated 200 million people in China use VC. We sought not only to better understand China’s huge online population as an important market and domain of innovation, but also to gain a useful, defamiliarized vantage point from which to think more generally about the emerging relationships between human-currency interaction and human-computer interaction.

Based on 5 weeks of fieldwork in four cities in China, our study reveals that how VC is perceived, obtained, and spent can critically shape gamers’ behavior and experience. Virtual and real currencies can interact in complex ways that promote, extend, and/or interfere with the value and character of game worlds. Bringing money into HCI design heightens existing issues of realness, trust, and fairness, and thus presents new challenges and opportunities for user experience innovation. This study was quoted in news media such as BusinessWeek. Related publications can be found here.

MetaBlog: understanding the global blogging community

The weblog, or “blog”, has quickly risen as a means of self-expression and knowledge sharing for people across geographic distance. Inspired by previous studies that show significant differences in technology practices across cultures, we conducted the first multilingual worldwide blogging survey to investigate the influence of regional culture on a blogging community. We asked whether bloggers' sense of community is influenced more by their local cultures or by a “universal” Internet culture.

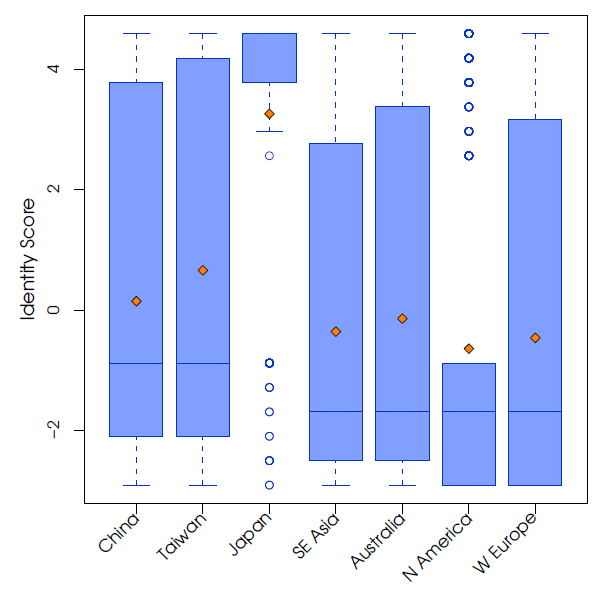

Our results, based on 1232 participants from four continents, show that while smaller differences could be found between Eastern and Western cultures, overall the global blogging community is indeed dominated by an Internet culture that shows no profound differences across cultures. However, one significant exception was found in Japanese bloggers and their concealment of identity. Compared to other cultures, the Japanese score was highly skewed towards not revealing identities (see the figure, courtesy of Norman Su), even with the use of aliases. This presents a paradox in that, on the one hand, Japanese bloggers view blogs as an entertainment medium, whereas on the other hand, they express personal matters and are extremely private.

Our research team includes Prof. Gloria Mark, Norman Su, Jon Froehlich, Brandon Herdrick, Xuefei Fan, Kelly H. Kim, Tosin Aiyelokun, Louise Barkhuus, and myself. We created a blog for this project, and two publications about this project can be found here.

Blog Survey Results

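The results of our blog survey were published in two international conference papers (the 2005 International Conference on Communities and Technologies and the 2005 International Conference on Social Intelligence Design). You can download both papers (in English) below. In brief, the two papers compare blogging communities along four dimensions: activism, reputation, social connectedness, and identity. The first paper compares blogging communities across cultural backgrounds; the second compares blogs of different topic types (political versus personal). For example, in the first paper we found that Japanese bloggers take more care than bloggers in other countries to conceal their real identities. In the second paper we found that political blogs have stronger social connectedness than personal blogs. If you only want the gist, you can read just the introduction and conclusion of each paper (as most researchers do).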

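This survey could not have been prepared without our brilliant collaborators: Jon Froehlich, Brandon Herdrick, Xuefei Fan, Kelly H. Kim, and Louise Barkhuus. We were all part of Prof. Gloria Mark's quantitative statistics class, and working together was a great pleasure. Finally, we especially thank the friends who helped publicize the survey and all the bloggers who took part; your feedback and constructive criticism benefited us greatly. If you have any questions about our blog survey, please look for answers in our two papers first. If you still have comments or questions, please email us directly: normsu or yangwang [at] ics [dot] uci [dot] edu. We will reply by email.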

A Bosom Buddy Afar Brings a Distant Land Near: Are Bloggers a Global Community?

Authors: Norman Makoto Su, Yang Wang, Gloria Mark, Tosin Aiyelokun, Tadashi Nakano

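Abstract: Internet-based information and communication technologies such as Usenet, online chat, and multi-user online games have made virtual communities a reality. A newer form of technology, the weblog (or blog), has quickly become a means of self-expression and of sharing knowledge across geographic distance. While previous research has focused mainly on blogs in Western countries, our survey targets the global blogging community. Earlier studies found that technology use differs greatly across cultural backgrounds. Inspired by this finding, we conducted a survey to explore the influence of regional culture on the global blogging community. We asked which has the greater influence on bloggers' sense of community: the regional culture in which bloggers live, or a “global” Internet culture. We ran a multilingual online survey worldwide and received 1232 valid responses from four continents. Although we found minor differences between Eastern and Western cultures, on the whole the global blogging community is dominated by an Internet culture, since there were no significant cross-cultural differences (note: the size of a difference here is judged in the statistical sense). One important exception, however, is that Japanese bloggers pay particular attention to concealing their real identities.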

Politics as Usual in the Blogosphere

Authors: Norman Makoto Su, Yang Wang, Gloria Mark

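Abstract: In recent years, the rise of weblogs (or blogs) has been changing how people communicate on the Internet. Blogs on political topics and blogs on personal topics have become especially popular. Both types of blogs form communities of authors and readers. In this paper we study how the notion of community is expressed in these two types of blogs and compare how they differ with respect to community. We focus on four aspects of community: activism, reputation, social connectedness, and identity. We ran a multilingual online survey worldwide and received 1232 valid responses from four continents (including 121 political blogs and 593 personal blogs). We found significant differences between the two types of blogs (note: the size of a difference here is judged in the statistical sense).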

Usability of Secure Device Pairing Methods: a comparative study

Electronic devices increasingly need to communicate among themselves, e.g., connecting a Bluetooth headset with your cell phone. However, establishing communication between two devices over a wireless channel is vulnerable to Man-in-the-Middle attacks. Secure device pairing refers to the establishment of secure communication between two devices over a wireless channel. A number of methods have been proposed to mitigate these attacks by leveraging human perceptual capabilities (e.g., vision) to create “out-of-band” authentication channels. However, these methods put various burdens on users.

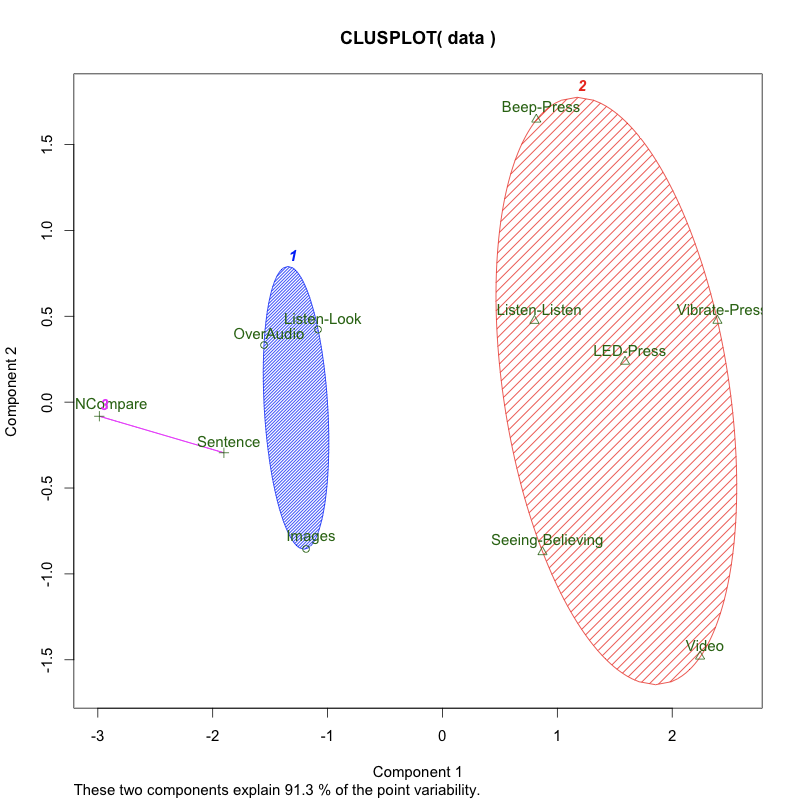

I was a core research member of a comparative usability study that asked users to connect two cell phones using 11 secure device pairing methods. For each method, we took several usability measures, such as task completion rate, task performance time, users' perceived security, and System Usability Scale (SUS) scores given by users. A cluster analysis based on principal components was performed to determine which methods are closely related with regard to our usability measures. The first principal component (PC1) explains nearly 75% of the variance. We believe that the figure is the clearest representation of our study's overall results. In it, the two methods in Cluster 3 (PIN-Compare and Sentence-Compare) perform best overall, and the three methods in Cluster 1 (Over-Audio, Image-Compare and Listen-Look) come in a close second. However, viewed in isolation, PIN-Compare stands out against all others. Our subjects' post-experimental ranking of the easiest and hardest methods exactly matched the ranking along the first principal component of our usability measures. We also identified problematic methods for certain classes of users as well as methods best suited for various device configurations. For more details about this study, please take a look at our SOUPS09 paper.
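For readers unfamiliar with SUS, the sketch below shows the standard scoring rule for the ten-item questionnaire (odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5). The function name and the example responses are illustrative assumptions and are not data from the study.

```python
def sus_score(responses):
    """Compute a System Usability Scale score (0 to 100) for one participant.

    `responses` holds the ten item ratings in questionnaire order, each an
    integer from 1 (strongly disagree) to 5 (strongly agree).
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1      # odd items are positively worded
        else:
            total += 5 - rating      # even items are negatively worded
    return total * 2.5


# Made-up ratings for one participant, for illustration only.
example = sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2])
```

Per-participant scores computed this way can then be averaged per pairing method and analyzed alongside the other usability measures.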

The research team members of this project are Prof. Alfred Kobsa, Prof. Gene Tsudik, graduate students Ersin Uzun, Rahim Sonawalla, and myself.

SocialBIRN: understanding how distributed collaborations work

We are a team that consists of faculty members Prof. Paul Dourish and Prof. Gloria Mark, research scientist Dr. Charlotte Lee (now a faculty member at the University of Washington), and two PhD students, Norman Su and myself. We studied a large scientific collaboratory, the Biomedical Informatics Research Network (BIRN), from a social perspective. BIRN consists of a number of physically distributed sites (see the figure, courtesy of BIRN). We conducted an ethnographic study to understand the role that technologies play in this kind of distributed collaboration and, more broadly, how scientific work is carried out in a distributed, collaborative manner. Norman Su and I wrote a report of suggestions for the fBIRN team.